
Content from Before we start

Last updated on 2026-04-28

Overview

Questions

- What is R and RStudio?

- What is a working directory?

- How should files be set up to import into R?

- How can I look for help with R functions?

Objectives

- Explain what R and RStudio are, what they are used for, and how they relate to each other.

- Describe the purpose of the RStudio Script, Console, Environment, and Plots panes.

- Organize files and directories for a set of analyses as an R Project, and understand the purpose of the working directory.

- Use the built-in RStudio help interface to search for more information on R functions.

- Demonstrate how to provide sufficient information for troubleshooting with the R user community.

What is R? What is RStudio?

The term “R” is used to refer to both the programming language and the software that interprets the scripts written using it.

RStudio is a popular way to write R scripts and interact with the R software. To function correctly, RStudio needs R and therefore both need to be installed on your computer.

Why learn R?

R does not involve lots of pointing and clicking, and that’s a good thing

In R, the results of your analysis rely on a series of written commands, and not on remembering a succession of pointing and clicking. That is a good thing! So, if you want to redo your analysis because you collected more data, you don’t have to remember which button you clicked in which order to obtain your results. With a stored series of commands in an R script, you can repeat running them and R will process the new dataset exactly the same way as before.

Working with scripts makes the steps you used in your analysis clear, and the code you write can be inspected by someone else who can give you feedback and spot mistakes.

Working with scripts forces you to have a deeper understanding of what you are doing, and facilitates your learning and comprehension of the methods you use.

R code is great for reproducibility

Reproducibility is when someone else, including your future self, can obtain the same results from the same dataset when using the same analysis.

R integrates with other tools to generate manuscripts from your code. If you collect more data, or fix a mistake in your dataset, the figures and the statistical tests in your manuscript are updated automatically.

R is widely used in academia and in industries such as pharma and biotech. These organisations expect analyses to be reproducible, so knowing R will give you an edge with these requirements.

R is interdisciplinary and extensible

With 10,000+ packages that can be installed to extend its capabilities, R provides a framework that allows you to combine statistical approaches from many scientific disciplines to best suit the analytical framework you need to analyze your data. For instance, R has packages for image analysis, GIS, time series, population genetics, and a lot more.

R works on data of all shapes and sizes

The skills you learn with R scale easily with the size of your dataset. Whether your dataset has hundreds or millions of lines, it won’t make much difference to you.

R is designed for data analysis. It comes with special data structures and data types that make handling of missing data and statistical factors convenient.

R can connect to spreadsheets, databases, and many other data formats, on your computer or on the web.

R produces high-quality graphics

The plotting functionalities in R are endless, and allow you to adjust any aspect of your graph to visualize your data more effectively.

R has a large and welcoming community

Thousands of people use R daily. Many of them are willing to help you through mailing lists and websites such as Stack Overflow, RStudio community, and Slack channels such as the R for Data Science online community (https://www.rfordatasci.com/). In addition, there are numerous online and in person meetups organised globally through organisations such as R Ladies Global (https://rladies.org/).

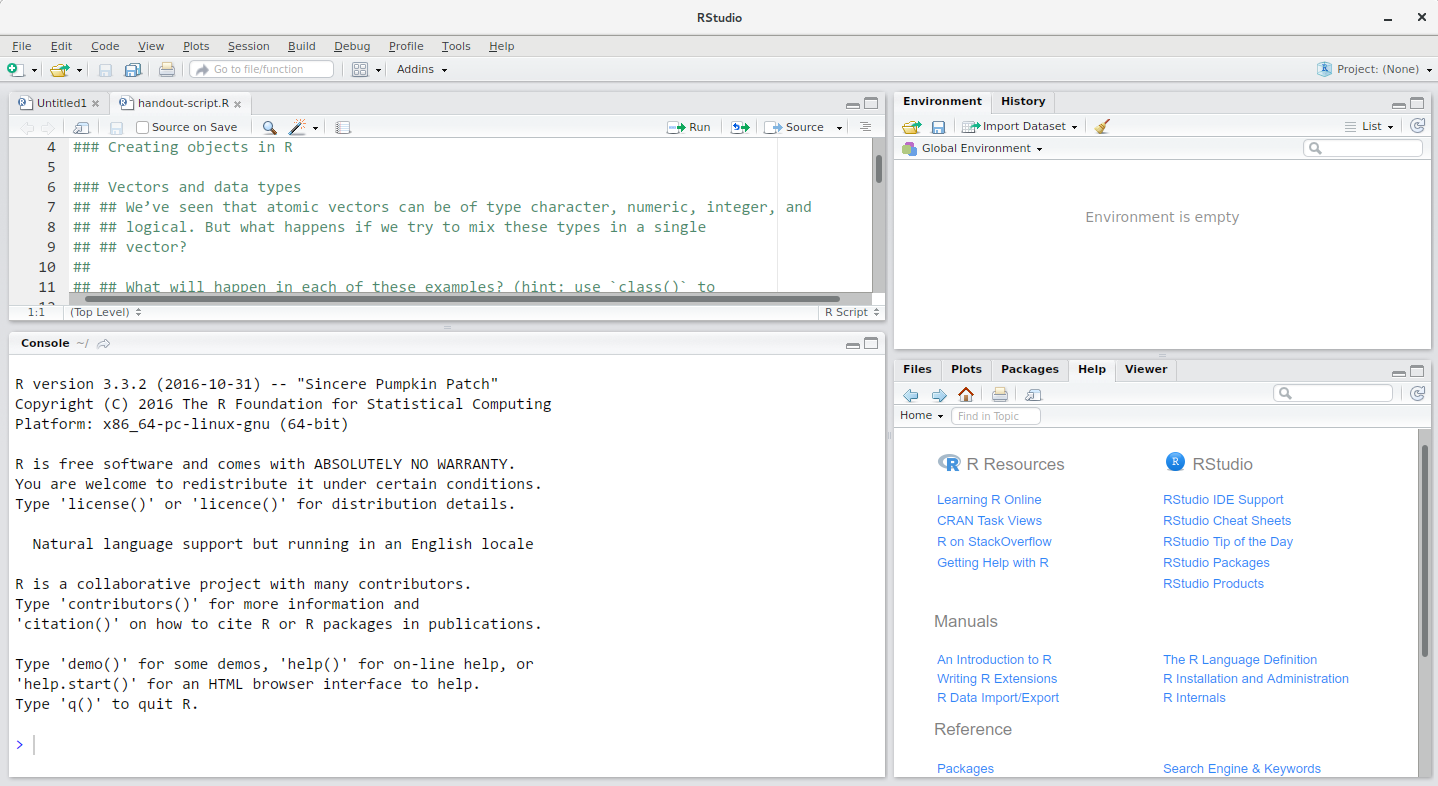

Knowing your way around RStudio

Let’s start by learning about RStudio, which is an Integrated Development Environment (IDE) for working with R.

The RStudio IDE open-source product is free under the Affero General Public License (AGPL) v3. The RStudio IDE is also available with a commercial license and priority email support from RStudio, PBC.

We will use RStudio IDE to write code, navigate the files on our computer, inspect the variables we are going to create, and visualize the plots we will generate. RStudio can also be used for other things (e.g., version control, developing packages, writing Shiny apps) that we will not cover during the workshop.

RStudio is divided into 4 “panes”:

- The Source for your scripts and documents (top-left, in the default layout)

- Your Environment/History (top-right) which shows all the objects in your working space (Environment) and your command history (History)

- Your Files/Plots/Packages/Help/Viewer (bottom-right)

- The R Console (bottom-left)

The placement of these panes and their content can be customized (see menu, Tools -> Global Options -> Pane Layout). For ease of use, settings such as background color, font color, font size, and zoom level can also be adjusted in this menu (Global Options -> Appearance).

One of the advantages of using RStudio is that all the information you need to write code is available in a single window. Additionally, with many shortcuts, autocompletion, and highlighting for the major file types you use while developing in R, RStudio will make typing easier and less error-prone.

Getting set up

It is good practice to keep a set of related data, analyses, and text self-contained in a single folder, called the working directory. All of the scripts within this folder can then use relative paths to files that indicate where inside the project a file is located (as opposed to absolute paths, which point to where a file is on a specific computer). Working this way allows you to move your project around on your computer and share it with others without worrying about whether or not the underlying scripts will still work.

RStudio provides a helpful set of tools to do this through its “Projects” interface, which not only creates a working directory for you, but also remembers its location (allowing you to quickly navigate to it) and optionally preserves custom settings and re-opens files to help you resume work after a break. Go through the steps below to create an “R Project” for this tutorial.

- Start RStudio.

- Under the File menu, click on New Project. Choose New Directory, then New Project.

- Enter a name for this new folder (or “directory”), and choose a convenient location for it. This will be your working directory for the rest of the day (e.g., ~/data-carpentry).

- Click on Create Project.

- Download the code handout, place it in your working directory and rename it (e.g., data-carpentry-script.R).

- (Optional) Set Preferences to ‘Never’ save workspace in RStudio.

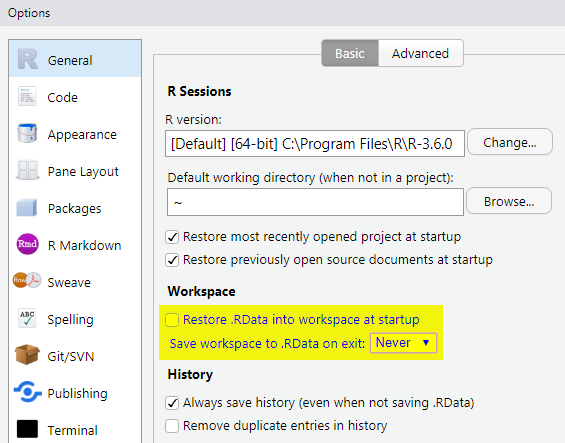

A workspace is your current working environment in R which includes any user-defined object. By default, all of these objects will be saved, and automatically loaded, when you reopen your project. Saving a workspace to .RData can be cumbersome, especially if you are working with larger datasets, and it can lead to hard-to-debug errors by having objects in memory you forgot you had. Therefore, it is often a good idea to turn this off. To do so, go to Tools -> ‘Global Options’ and select the ‘Never’ option for ‘Save workspace to .RData on exit’.

Organizing your working directory

Using a consistent folder structure across your projects will help keep things organized, and will help you to find/file things in the future. This can be especially helpful when you have multiple projects. In general, you may create directories (folders) for scripts, data, and documents.

- data_raw/ & data/ Use these folders to store raw data and intermediate datasets you may create for the need of a particular analysis. For the sake of transparency and provenance, you should always keep a copy of your raw data accessible and do as much of your data cleanup and preprocessing programmatically (i.e., with scripts, rather than manually) as possible. Separating raw data from processed data is also a good idea. For example, you could have files data_raw/tree_survey.plot1.txt and ...plot2.txt kept separate from a data/tree.survey.csv file generated by the scripts/01.preprocess.tree_survey.R script.

- documents/ This would be a place to keep outlines, drafts, and other text.

- scripts/ This would be the location to keep your R scripts for different analyses or plotting, and potentially a separate folder for your functions (more on that later).

- Additional (sub)directories depending on your project needs.

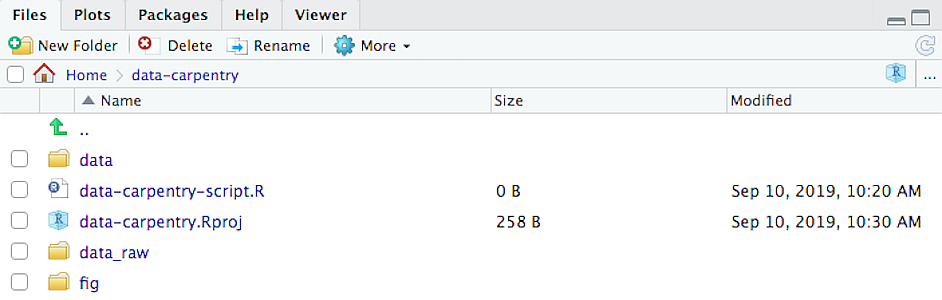

For this workshop, we will need a data_raw/ folder to store our raw data, and we will use data/ for when we learn how to export data as CSV files, and a fig/ folder for the figures that we will save.

- Under the Files tab on the right of the screen, click on New Folder and create a folder named data_raw within your newly created working directory (e.g., ~/data-carpentry/). (Alternatively, type dir.create("data_raw") at your R console.) Repeat these operations to create a data and a fig folder.
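The console alternative mentioned above can be extended to create all three folders in one go. The following is only a sketch: it assumes your working directory is already the project folder, and the object name folders is chosen here for illustration.

```r
# Folder names used in this workshop
folders <- c("data_raw", "data", "fig")

# Create each folder inside the current working directory;
# showWarnings = FALSE keeps dir.create() quiet if a folder already exists
for (folder in folders) {
  dir.create(folder, showWarnings = FALSE)
}

# Check that all three folders now exist (one TRUE/FALSE per folder)
dir.exists(folders)
```

Running this a second time is harmless, because existing folders are left untouched.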

We are going to keep the script in the root of our working directory because we are only going to use one file. Later, when you start to create more complex projects, it might make sense to organize scripts in sub-directories.

Your working directory should now contain your script along with the data_raw/, data/, and fig/ folders.

The working directory

The working directory is an important concept to understand. It is the place where R looks for files and saves them. When you write code for your project, it should refer to files in relation to the root of your working directory and only need files within this structure.

RStudio assists you in this regard and sets the working directory automatically to the directory where you have placed your project. If you need to check it, you can use getwd(). If for some reason your working directory is not what it should be, you can change it in the RStudio interface by navigating in the file browser to where your working directory should be, clicking on the blue gear icon “More”, and selecting “Set As Working Directory”. Alternatively, you can use setwd("/path/to/working/directory") to reset your working directory. However, your scripts should not include this line because it will fail on someone else’s computer.
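As a rough illustration of why relative paths travel well, the sketch below checks the working directory and builds a path relative to it. The file name surveys.csv is hypothetical and used only for illustration; the file does not need to exist for this code to run.

```r
# Where is R currently looking for files?
getwd()

# Build a path relative to the working directory.
# "surveys.csv" is a hypothetical file name, not part of the lesson material.
relative_path <- file.path("data_raw", "surveys.csv")
relative_path

# The same file expressed as an absolute path on this particular computer
file.path(getwd(), relative_path)
```

Only the relative form belongs in a script: the absolute form changes from one computer to the next, while the relative one stays the same as long as the project folder is kept intact.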

Interacting with R

The basis of programming is that we write down instructions for the computer to follow, and then we tell the computer to follow those instructions. We write, or code, instructions in R because it is a common language that both the computer and we can understand. We call the instructions commands and we tell the computer to follow the instructions by executing (also called running) those commands.

There are two main ways of interacting with R: by using the console or by using script files (plain text files that contain your code). The console pane (in RStudio, the bottom left panel) is the place where commands written in the R language can be typed and executed immediately by the computer. It is also where the results will be shown for commands that have been executed. You can type commands directly into the console and press Enter to execute those commands, but they will be forgotten when you close the session.

Because we want our code and workflow to be reproducible, it is better to type the commands we want in the script editor, and save the script. This way, there is a complete record of what we did, and anyone (including our future selves!) can easily replicate the results on their computer.

RStudio allows you to execute commands directly from the script editor by using the Ctrl + Enter shortcut (on Macs, Cmd + Return will work, too). The command on the current line in the script (indicated by the cursor) or all of the commands in the currently selected text will be sent to the console and executed when you press Ctrl + Enter. You can find other keyboard shortcuts in this RStudio cheatsheet about the RStudio IDE.

At some point in your analysis you may want to check the content of a variable or the structure of an object, without necessarily keeping a record of it in your script. You can type these commands and execute them directly in the console. RStudio provides the Ctrl + 1 and Ctrl + 2 shortcuts, which allow you to jump between the script and the console panes.

If R is ready to accept commands, the R console shows a > prompt. If it receives a command (typed, copy-pasted, or sent from the script editor using Ctrl + Enter), R will try to execute it, and when ready, will show the results and come back with a new > prompt to wait for new commands.

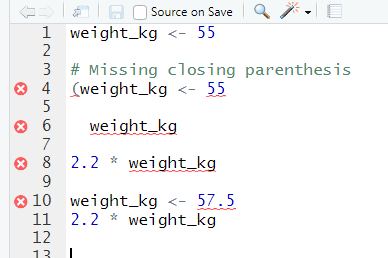

If R is still waiting for you to enter more text because the command isn’t complete yet, the console will show a + prompt. It means that you haven’t finished entering a complete command. This is usually because you have not ‘closed’ a parenthesis or quotation, i.e. you don’t have the same number of left-parentheses as right-parentheses, or the same number of opening and closing quotation marks. When this happens, and you thought you finished typing your command, click inside the console window and press Esc; this will cancel the incomplete command and return you to the > prompt.

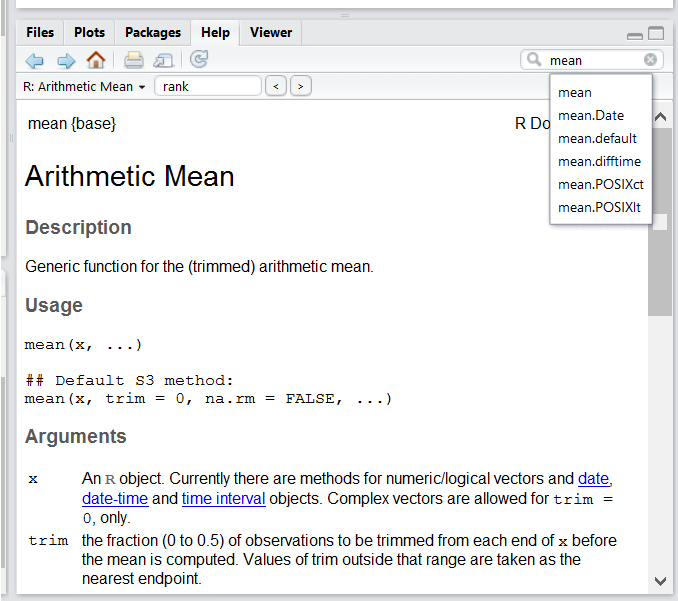

Seeking help

Searching function documentation with ? and ??

If you need help with a specific function, let’s say mean(), you can type ?mean or press F1 while your cursor is on the function name. If you are looking for a function to do a particular task, but don’t know the function name, you can use the double question mark ??, for example ??kruskall. Both commands will open matching help files in RStudio’s help panel in the lower right corner. You can also use the help panel to search help directly, as seen in the screenshot.
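Both shortcuts have function equivalents in base R, help() and help.search(), which can be handy inside scripts. The sketch below stores the results in objects (the names mean_help and matches are chosen here for illustration) rather than opening the help panel straight away.

```r
# help("mean") is the function form of ?mean
mean_help <- help("mean")

# help.search("kruskal") is the function form of ??kruskal;
# it searches the help pages of installed packages for the term
matches <- help.search("kruskal")

# Typing the name of either object (mean_help or matches) at the console
# then displays the corresponding help page or list of search results
```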

Automatic code completion

When you write code in RStudio, you can use its automatic code completion to remind yourself of a function’s name or arguments. Start typing the function name and pay attention to the suggestions that pop up. Use the up and down arrow to select a suggested code completion and Tab to apply it. You can also use code completion to complete function argument names, object names, and file names. It even works if you don’t get the spelling 100% correct.

Package vignettes and cheat sheets

In addition to the documentation for individual functions, many packages have vignettes – instructions for how to use the package to do certain tasks. Vignettes are great for learning by example. Vignettes are accessible via the package help and by using the function browseVignettes().
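If you prefer to stay in the console, the related base R function vignette() returns the same kind of listing as an object. The sketch below restricts the listing to the utils package, which ships with R, purely to keep the output short; the object name available is an assumption made for this example.

```r
# List the vignettes provided by one installed package
available <- vignette(package = "utils")

# The listing is stored as a table with one row per vignette
available$results[, c("Package", "Item", "Title"), drop = FALSE]

# browseVignettes("utils") would show the same listing in a web browser
```

The table may be empty for packages that do not ship any vignettes.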

There is also a Help menu at the top of the RStudio window that has cheat sheets for popular packages, RStudio keyboard shortcuts, and more.

Finding more functions and packages

RStudio’s help only searches the packages that you have installed on your machine, but there are many more available on CRAN and GitHub. To search across all available R packages, you can use the website rdocumentation.org. Often, a generic Google or internet search “R <task>” will send you to the appropriate package documentation or a forum where someone else has already asked your question. Many packages also have websites with additional help, tutorials, news and more (for example tidyverse.org).

Dealing with error messages

Don’t get discouraged if your code doesn’t run immediately! Error messages are common when programming, and fixing errors is part of any programmer’s daily work. Often, the problem is a small typo in a variable name or a missing parenthesis. Watch for the red x’s next to your code in RStudio. These may provide helpful hints about the source of the problem.

If you can’t fix an error yourself, start by googling it. Some error messages are too generic to diagnose a problem (e.g. “subscript out of bounds”). In that case it might help to include the name of the function or package you’re using in your query.

Asking for help

If your Google search is unsuccessful, you may want to ask other R users for help. There are different places where you can ask for help. During this workshop, don’t hesitate to talk to your neighbor, compare your answers, and ask for help. You might also be interested in organizing regular meetings following the workshop to keep learning from each other. If you have a friend or colleague with more experience than you, they might also be able and willing to help you.

Besides that, there are a few places on the internet that provide help:

- Stack Overflow: Many questions have already been answered, but the challenge is to use the right words in your search to find them. If your question hasn’t been answered before and is well crafted, chances are you will get an answer in less than 5 min. Remember to follow their guidelines on how to ask a good question.

- The R-help mailing list: it is used by a lot of people (including most of the R core team). If your question is valid (read its Posting Guide), you are likely to get an answer very fast, but the tone can be pretty dry and it is not always very welcoming to new users.

- If your question is about a specific package rather than a base R function, see if there is a mailing list for the package. Usually it’s included in the DESCRIPTION file of the package that can be accessed using packageDescription("<package-name>").

- You can also try to contact the package author directly, by emailing them or opening an issue on the code repository (e.g., on GitHub).

- There are also some topic-specific mailing lists (GIS, phylogenetics, etc…). The complete list is on the R mailing lists website.
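To see what packageDescription() returns, here is a small sketch using the stats package, which is chosen only because it is installed with R. Which contact fields are present varies from package to package, so treat the fields shown as examples rather than a guaranteed set.

```r
# Read the DESCRIPTION metadata of an installed package
desc <- packageDescription("stats")

desc$Package     # package name
desc$Version     # installed version
desc$Maintainer  # who maintains the package

# Contributed packages often declare a BugReports URL as well;
# this is NULL when the package does not list one
desc$BugReports
```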

The key to receiving help from someone is for them to rapidly grasp your problem. Thus, you should be as precise as possible when describing your problem and help others to pinpoint where the issue might be. Try to…

- Use the correct words to describe your problem. Otherwise you might get an answer pointing to the misuse of your words rather than answering your question.

- Generalize what you are trying to do, so people outside your field can understand the question.

- Reduce what does not work to a simple reproducible example. For instance, instead of using your real data set, create a small generic one. For more information on how to write a reproducible example see this article from the reprex package. Learning how to use the reprex package is also very helpful for this.

- Include the output of sessionInfo() in your question. It provides information about your platform, the versions of R and the packages that you are using. As an example, here you can see the versions of R and all the packages that we are using to run the code in this lesson:

R

sessionInfo()

OUTPUT

#> R version 4.5.3 (2026-03-11)

#> Platform: x86_64-pc-linux-gnu

#> Running under: Ubuntu 22.04.5 LTS

#>

#> Matrix products: default

#> BLAS: /usr/lib/x86_64-linux-gnu/blas/libblas.so.3.10.0

#> LAPACK: /usr/lib/x86_64-linux-gnu/lapack/liblapack.so.3.10.0 LAPACK version 3.10.0

#>

#> locale:

#> [1] LC_CTYPE=C.UTF-8 LC_NUMERIC=C LC_TIME=C.UTF-8

#> [4] LC_COLLATE=C.UTF-8 LC_MONETARY=C.UTF-8 LC_MESSAGES=C.UTF-8

#> [7] LC_PAPER=C.UTF-8 LC_NAME=C LC_ADDRESS=C

#> [10] LC_TELEPHONE=C LC_MEASUREMENT=C.UTF-8 LC_IDENTIFICATION=C

#>

#> time zone: UTC

#> tzcode source: system (glibc)

#>

#> attached base packages:

#> [1] stats graphics grDevices utils datasets methods base

#>

#> other attached packages:

#> [1] RSQLite_2.3.11 lubridate_1.9.4 forcats_1.0.0 stringr_1.5.1

#> [5] dplyr_1.1.4 purrr_1.0.4 readr_2.1.5 tidyr_1.3.1

#> [9] tibble_3.2.1 ggplot2_3.5.2 tidyverse_2.0.0 knitr_1.50

#>

#> loaded via a namespace (and not attached):

#> [1] bit_4.6.0 gtable_0.3.6 compiler_4.5.3 renv_1.2.2

#> [5] tidyselect_1.2.1 blob_1.2.4 scales_1.4.0 fastmap_1.2.0

#> [9] yaml_2.3.10 R6_2.6.1 generics_0.1.3 DBI_1.2.3

#> [13] pillar_1.10.2 RColorBrewer_1.1-3 tzdb_0.5.0 rlang_1.2.0

#> [17] cachem_1.1.0 stringi_1.8.7 xfun_0.52 bit64_4.6.0-1

#> [21] memoise_2.0.1 timechange_0.4.0 cli_3.6.5 withr_3.0.2

#> [25] magrittr_2.0.3 grid_4.5.3 hms_1.1.3 lifecycle_1.0.5

#> [29] vctrs_0.7.3 evaluate_1.0.3 glue_1.8.0 farver_2.1.2

#> [33] tools_4.5.3 pkgconfig_2.0.3

How to learn more after the workshop?

The material we cover during this workshop will give you a taste of how you can use R to analyze data for your own research. However, to do advanced operations such as cleaning your dataset, using statistical methods, or creating beautiful graphics you will need to learn more.

The best way to become proficient and efficient at R, as with any other tool, is to use it to address your actual research questions. As a beginner, it can feel daunting to have to write a script from scratch. Given that many people make their code available online, modifying existing code to suit your purpose can give you your first hands-on experience using R for your own work and help you become comfortable with eventually creating your own scripts.

More resources

More about R

- The Introduction to R can be dense for people with little programming experience but it is a good place to understand the underpinnings of the R language.

- The R FAQ is dense and technical but it is full of useful information.

- To stay up to date, follow #rstats on Twitter. Twitter can also be a way to get questions answered and learn about useful R packages and tips (e.g., @RLangTips).

How to ask good programming questions?

- The rOpenSci community call “How to ask questions so they get answered”, (rOpenSci site and video recording) includes a presentation of the reprex package and of its philosophy.

- blog.Revolutionanalytics.com and this blog post by Jon Skeet have comprehensive advice on how to ask programming questions.

Key Points

- R is a programming language and RStudio is the IDE that assists in using R.

- There are many benefits to learning R, including writing reproducible code, the ability to use a variety of datasets, and a broad, open-source community of practitioners.

- Files related to analysis should be organized within a single working directory.

- R uses commands containing functions to tell the computer what to do.

- Documentation for each function is available within RStudio, or users can ask for help from one of many online forums, cheatsheets, or email lists.

Content from Introduction to R

Last updated on 2026-04-28

Overview

Questions

- How do you create objects in R?

- How do you save R code for later use?

- How do you manipulate data in R?

Objectives

- Define the following terms as they relate to R: object, assign, call, function, arguments, options.

- Create objects and assign values to them in R.

- Learn how to name objects.

- Save a script file for later use.

- Use comments to annotate a script.

- Solve simple arithmetic operations in R.

- Call functions and use arguments to change their default options.

- Inspect the content of vectors and manipulate their content.

- Subset and extract values from vectors.

- Analyze vectors with missing data.

Creating objects in R

You can get output from R simply by typing math in the console:

R

3 + 5

12 / 7

However, to do useful and interesting things, we need to assign values to objects. To create an object, we need to give it a name followed by the assignment operator <-, and the value we want to give it:

R

weight_kg <- 55

<- is the assignment operator we will use in this course. It assigns values on the right to objects on the left. So, after executing x <- 3, the value of x is 3. For historical reasons, you can also use = for assignments, but not in every context. Because of the slight differences in syntax, it is good practice to always use <- for assignments.

In RStudio, typing Alt + - (push Alt at the same time as the - key) will write <- in a single keystroke on a PC, while typing Option + - (push Option at the same time as the - key) does the same on a Mac.

Objects can be given almost any name such as x, current_temperature, or subject_id. Here are some further guidelines on naming objects:

- You want your object names to be explicit and not too long.

- They cannot start with a number (2x is not valid, but x2 is).

- R is case sensitive, so for example, weight_kg is different from Weight_kg.

- There are some names that cannot be used because they are the names of fundamental functions in R (e.g., if, else, for, see here for a complete list). In general, even if it’s allowed, it’s best to not use other function names (e.g., c, T, mean, data, df, weights). If in doubt, check the help to see if the name is already in use.

- It’s best to avoid dots (.) within names. Many function names in R itself have them and dots also have a special meaning (methods) in R and other programming languages. To avoid confusion, don’t include dots in names.

- It is recommended to use nouns for object names and verbs for function names.

- Be consistent in the styling of your code, such as where you put spaces, how you name objects, etc. Styles can include “lower_snake”, “UPPER_SNAKE”, “lowerCamelCase”, “UpperCamelCase”, etc. Using a consistent coding style makes your code clearer to read for your future self and your collaborators. In R, three popular style guides come from Google, Jean Fan and the tidyverse. The tidyverse style is very comprehensive and may seem overwhelming at first. You can install the lintr package to automatically check for issues in the styling of your code.
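A few of these naming rules can be tried out directly. The sketch below is illustrative only: the values are arbitrary, and make.names() is a base R helper used here just to show how R would repair an invalid name.

```r
# R is case sensitive: these are two separate objects
weight_kg <- 55
Weight_kg <- 60
identical(weight_kg, Weight_kg)  # FALSE

# A name may contain a number, but not start with one
x2 <- 1
# 2x <- 1   # would stop with a syntax error, so it is left commented out

# make.names() shows how R turns an invalid name into a valid one
make.names("2x")  # "X2x"
```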

Objects vs. variables

What are known as objects in R are known as variables in many other programming languages. Depending on the context, object and variable can have drastically different meanings. However, in this lesson, the two words are used synonymously. For more information see: https://cran.r-project.org/doc/manuals/r-release/R-lang.html#Objects

When assigning a value to an object, R does not print anything. You can force R to print the value by using parentheses or by typing the object name:

R

weight_kg <- 55 # doesn't print anything

(weight_kg <- 55) # but putting parentheses around the call prints the value of `weight_kg`

weight_kg # and so does typing the name of the object

Now that R has weight_kg in memory, we can do arithmetic with it. For instance, we may want to convert this weight into pounds (weight in pounds is 2.2 times the weight in kg):

R

2.2 * weight_kg

We can also change an object’s value by assigning it a new one:

R

weight_kg <- 57.5

2.2 * weight_kg

Assigning a value to one object does not change the values of other objects. For example, let’s store the animal’s weight in pounds in a new object, weight_lb:

R

weight_lb <- 2.2 * weight_kg

and then change weight_kg to 100.

R

weight_kg <- 100

What do you think is the current content of the object weight_lb? 126.5 or 220?

Saving your code

Up to now, your code has been in the console. This is useful for quick queries but not so helpful if you want to revisit your work for any reason. A new script can be opened by pressing Ctrl + Shift + N. It is wise to save your script file immediately. To do this press Ctrl + S. This will open a dialogue box where you can decide where to save your script file, and what to name it. The .R file extension is added automatically and ensures your file will open with RStudio.

Don’t forget to save your work periodically by pressing Ctrl + S.

Comments

The comment character in R is #. Anything to the right of a # in a script will be ignored by R. It is useful to leave notes and explanations in your scripts. For convenience, RStudio provides a keyboard shortcut to comment or uncomment a paragraph: after selecting the lines you want to comment, press at the same time on your keyboard Ctrl + Shift + C. If you only want to comment out one line, you can put the cursor at any location of that line (i.e. no need to select the whole line), then press Ctrl + Shift + C.
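The different ways of using # can be seen side by side in a short sketch. The object names height_cm and height_m and their values are made up for this example.

```r
# A full-line comment: convert a height from centimetres to metres
height_cm <- 180
height_m <- height_cm / 100  # a trailing comment: everything after # is ignored

# A commented-out line of code is not executed:
# height_m <- 0

height_m  # still 1.8, because the line above was never run
```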

Challenge

What are the values after each statement in the following?

R

mass <- 47.5 # mass?

age <- 122 # age?

mass <- mass * 2.0 # mass?

age <- age - 20 # age?

mass_index <- mass/age # mass_index?

Functions and their arguments

Functions are “canned scripts” that automate more complicated sets of commands including operations, assignments, etc. Many functions are predefined, or can be made available by importing R packages (more on that later). A function usually takes one or more inputs called arguments. Functions often (but not always) return a value. A typical example would be the function sqrt(). The input (the argument) must be a number, and the return value (in fact, the output) is the square root of that number. Executing a function (‘running it’) is called calling the function. An example of a function call is:

R

weight_kg <- sqrt(10)

Here, the value of 10 is given to the sqrt() function, the sqrt() function calculates the square root, and returns the value which is then assigned to the object weight_kg. This function takes one argument; other functions might take several.

The return ‘value’ of a function need not be numerical (like that of sqrt()), and it also does not need to be a single item: it can be a set of things, or even a dataset. We’ll see that when we read data files into R.

Arguments can be anything, not only numbers or filenames, but also other objects. Exactly what each argument means differs per function, and must be looked up in the documentation (see below). Some functions take arguments which may either be specified by the user, or, if left out, take on a default value: these are called options. Options are typically used to alter the way the function operates, such as whether it ignores ‘bad values’, or what symbol to use in a plot. However, if you want something specific, you can specify a value of your choice which will be used instead of the default.

Let’s try a function that can take multiple arguments: round().

R

round(3.14159)

OUTPUT

#> [1] 3

Here, we’ve called round() with just one argument, 3.14159, and it has returned the value 3. That’s because the default is to round to the nearest whole number. If we want more digits we can see how to do that by getting information about the round function. We can use args(round) to find what arguments it takes, or look at the help for this function using ?round.

R

args(round)

OUTPUT

#> function (x, digits = 0, ...)

#> NULL

R

?round

We see that if we want a different number of digits, we can type digits = 2 or however many we want.

R

round(3.14159, digits = 2)

OUTPUT

#> [1] 3.14

If you provide the arguments in the exact same order as they are defined you don’t have to name them:

R

round(3.14159, 2)

OUTPUT

#> [1] 3.14

And if you do name the arguments, you can switch their order:

R

round(digits = 2, x = 3.14159)

OUTPUT

#> [1] 3.14

It’s good practice to put the non-optional arguments (like the number you’re rounding) first in your function call, and to then specify the names of all optional arguments. If you don’t, someone reading your code might have to look up the definition of a function with unfamiliar arguments to understand what you’re doing.
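One reason naming optional arguments matters is that R will not warn you if unnamed arguments are given in the wrong order. The sketch below compares the two calling styles for round(); the object names are chosen here for illustration.

```r
# Matching by position and matching by name give the same result
by_position <- round(3.14159, 2)
by_name <- round(x = 3.14159, digits = 2)
identical(by_position, by_name)  # TRUE

# Swapping unnamed arguments runs without an error, but now the number 2
# is the value being rounded and 3.14159 is taken as the number of digits
swapped_unnamed <- round(2, 3.14159)
swapped_unnamed  # 2
```

With names, the swapped call round(digits = 2, x = 3.14159) still rounds the intended number.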

Vectors and data types

A vector is the most common and basic data type in R, and is pretty much the workhorse of R. A vector is composed of a series of values, which can be either numbers or characters. We can assign a series of values to a vector using the c() function. For example, we can create a vector of animal weights and assign it to a new object weight_g:

R

weight_g <- c(50, 60, 65, 82)

weight_g

A vector can also contain characters:

R

animals <- c("mouse", "rat", "dog")

animals

The quotes around “mouse”, “rat”, etc. are essential here. Without

the quotes R will assume objects have been created called

mouse, rat and dog. As these

objects don’t exist in R’s memory, there will be an error message.

There are many functions that allow you to inspect the content of a

vector. length() tells you how many elements are in a

particular vector:

R

length(weight_g)

length(animals)

An important feature of a vector is that all of the elements are the same type of data. The function class() indicates what kind of object you are working with:

R

class(weight_g)

class(animals)

The function str() provides an overview of the structure

of an object and its elements. It is a useful function when working with

large and complex objects:

R

str(weight_g)

str(animals)

You can use the c() function to add other elements to

your vector:

R

weight_g <- c(weight_g, 90) # add to the end of the vector

weight_g <- c(30, weight_g) # add to the beginning of the vector

weight_g

In the first line, we take the original vector weight_g,

add the value 90 to the end of it, and save the result back

into weight_g. Then we add the value 30 to the

beginning, again saving the result back into weight_g.

We can do this over and over again to grow a vector, or assemble a dataset. As we program, this may be useful to add results that we are collecting or calculating.

An atomic vector is the simplest R data type and is a linear vector of a single type. Above, we saw 2 of the 6 main atomic vector types that R uses: "character" and "numeric" (or "double"). These are the basic building blocks that all R objects are built from. The other 4 atomic vector types are:

- "logical" for TRUE and FALSE (the boolean data type)

- "integer" for integer numbers (e.g., 2L, the L indicates to R that it’s an integer)

- "complex" to represent complex numbers with real and imaginary parts (e.g., 1 + 4i) and that’s all we’re going to say about them

- "raw" for bitstreams that we won’t discuss further

You can check the type of your vector using the typeof()

function and inputting your vector as the argument.
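A brief sketch of typeof() on a few small vectors follows. The values and object names are made up for illustration. Note that class() reports "numeric" where typeof() reports "double":

```r
# One example vector per atomic type discussed above (values are arbitrary)
type_double    <- typeof(c(50, 60, 65))      # "double"
type_character <- typeof(c("mouse", "rat"))  # "character"
type_logical   <- typeof(c(TRUE, FALSE))     # "logical"
type_integer   <- typeof(c(2L, 4L))          # "integer"
type_complex   <- typeof(1 + 4i)             # "complex"

# class() and typeof() can use different words for the same vector
class_double <- class(c(50, 60, 65))         # "numeric"
```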

Vectors are one of the many data structures that R

uses. Other important ones are lists (list), matrices

(matrix), data frames (data.frame), factors

(factor) and arrays (array).

Challenge

- We’ve seen that atomic vectors can be of type character, numeric (or double), integer, and logical. But what happens if we try to mix these types in a single vector?

R implicitly converts them to all be the same type

Challenge (continued)

- What will happen in each of these examples? (hint: use class() to check the data type of your objects):

R

num_char <- c(1, 2, 3, "a")

num_logical <- c(1, 2, 3, TRUE)

char_logical <- c("a", "b", "c", TRUE)

tricky <- c(1, 2, 3, "4")

Why do you think it happens?

Vectors can be of only one data type. R tries to convert (coerce) the content of this vector to find a “common denominator” that doesn’t lose any information.

Challenge (continued)

- How many values in combined_logical are "TRUE" (as a character) in the following example (reusing the 2 ..._logicals from above):

R

combined_logical <- c(num_logical, char_logical)

Only one. There is no memory of past data types, and the coercion happens the first time the vector is evaluated. Therefore, the TRUE in num_logical gets converted into a 1 before it gets converted into "1" in combined_logical.

Challenge (continued)

- You’ve probably noticed that objects of different types get converted into a single, shared type within a vector. In R, we call converting objects from one class into another class coercion. These conversions happen according to a hierarchy, whereby some types get preferentially coerced into other types. Can you draw a diagram that represents the hierarchy of how these data types are coerced?

logical → numeric → character ← logical
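The hierarchy can be checked directly with class(). The following is a minimal sketch with made-up vectors and object names:

```r
# logical + numeric -> numeric (TRUE becomes 1)
lgl_num <- c(TRUE, 2)
class(lgl_num)   # "numeric"

# numeric + character -> character (2 becomes "2")
num_chr <- c(2, "a")
class(num_chr)   # "character"

# logical + character -> character (TRUE becomes "TRUE")
lgl_chr <- c(TRUE, "a")
class(lgl_chr)   # "character"
```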

Subsetting vectors

If we want to extract one or several values from a vector, we must provide one or several indices in square brackets. For instance:

R

animals <- c("mouse", "rat", "dog", "cat")

animals[2]

OUTPUT

#> [1] "rat"

R

animals[c(3, 2)]

OUTPUT

#> [1] "dog" "rat"

We can also repeat the indices to create an object with more elements than the original one:

R

more_animals <- animals[c(1, 2, 3, 2, 1, 4)]

more_animals

OUTPUT

#> [1] "mouse" "rat" "dog" "rat" "mouse" "cat"

R indices start at 1. Programming languages like Fortran, MATLAB, Julia, and R start counting at 1, because that’s what human beings typically do. Languages in the C family (including C++, Java, Perl, and Python) count from 0 because that’s simpler for computers to do.

Conditional subsetting

Another common way of subsetting is by using a logical vector.

TRUE will select the element with the same index, while

FALSE will not:

R

weight_g <- c(21, 34, 39, 54, 55)

weight_g[c(TRUE, FALSE, FALSE, TRUE, TRUE)]

OUTPUT

#> [1] 21 54 55

Typically, these logical vectors are not typed by hand, but are the output of other functions or logical tests. For instance, if you wanted to select only the values above 50:

R

weight_g > 50 # will return logicals with TRUE for the indices that meet the condition

OUTPUT

#> [1] FALSE FALSE FALSE TRUE TRUE

R

## so we can use this to select only the values above 50

weight_g[weight_g > 50]

OUTPUT

#> [1] 54 55

You can combine multiple tests using & (both conditions are true, AND) or | (at least one of the conditions is true, OR):

R

weight_g[weight_g > 30 & weight_g < 50]

OUTPUT

#> [1] 34 39

R

weight_g[weight_g <= 30 | weight_g == 55]

OUTPUT

#> [1] 21 55

R

weight_g[weight_g >= 30 & weight_g == 21]

OUTPUT

#> numeric(0)

Here, > stands for “greater than”, < for “less than”, >= for “greater than or equal to”, <= for “less than or equal to”, and == for “equal to”. The double equal sign == is a test for equality between the left and right hand sides, and should not be confused with the single = sign, which performs variable assignment (similar to <-).
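Because TRUE and FALSE are coerced to 1 and 0 in arithmetic, the result of a logical test can also be counted rather than used for subsetting. The sketch below uses the base R functions sum(), mean(), and any(), which are not introduced elsewhere in this lesson. The object names are made up:

```r
weight_g <- c(21, 34, 39, 54, 55)

# Logical vector: TRUE where the weight is above 50
is_heavy <- weight_g > 50

# TRUE counts as 1, so sum() gives the number of matches
n_heavy <- sum(is_heavy)        # 2

# ... and mean() gives the proportion of matches
prop_heavy <- mean(is_heavy)    # 0.4

# any() asks whether at least one element passes the test
has_21 <- any(weight_g == 21)   # TRUE
```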

A common task is to search for certain strings in a vector. One could use the “or” operator | to test for equality to multiple values, but this can quickly become tedious. The function %in% allows you to test, for each element of a vector, whether it is found in a search vector:

R

animals <- c("mouse", "rat", "dog", "cat", "cat")

# return both rat and cat

animals[animals == "cat" | animals == "rat"]

OUTPUT

#> [1] "rat" "cat" "cat"

R

# return a logical vector that is TRUE for the elements within animals

# that are found in the character vector and FALSE for those that are not

animals %in% c("rat", "cat", "dog", "duck", "goat", "bird", "fish")

OUTPUT

#> [1] FALSE TRUE TRUE TRUE TRUE

R

# use the logical vector created by %in% to return elements from animals

# that are found in the character vector

animals[animals %in% c("rat", "cat", "dog", "duck", "goat", "bird", "fish")]

OUTPUT

#> [1] "rat" "dog" "cat" "cat"

Challenge (optional)

- Can you figure out why "four" > "five" returns TRUE?

When using “>” or “<” on strings, R compares their alphabetical order. Here “four” comes after “five”, and therefore is “greater than” it.

Missing data

As R was designed to analyze datasets, it includes the concept of

missing data (which is uncommon in other programming languages). Missing

data are represented in vectors as NA.

When doing operations on numbers, most functions will return

NA if the data you are working with include missing values.

This feature makes it harder to overlook the cases where you are dealing

with missing data. You can add the argument na.rm = TRUE to

calculate the result as if the missing values were removed

(rm stands for ReMoved) first.

R

heights <- c(2, 4, 4, NA, 6)

mean(heights)

max(heights)

mean(heights, na.rm = TRUE)

max(heights, na.rm = TRUE)

If your data include missing values, you may want to become familiar

with the functions is.na(), na.omit(), and

complete.cases(). See below for examples.

R

## Extract those elements which are not missing values.

heights[!is.na(heights)]

## Returns the object with incomplete cases removed.

# The returned object is an atomic vector of type "numeric" (or "double").

na.omit(heights)

## Extract those elements which are complete cases.

# The returned object is an atomic vector of type "numeric" (or "double").

heights[complete.cases(heights)]

Recall that you can use the typeof() function to find

the type of your atomic vector.
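As a short sketch of why is.na() is needed, note that comparing with == NA does not find missing values, because any comparison with NA is itself NA. The object names below are made up for illustration:

```r
heights <- c(2, 4, 4, NA, 6)

# Comparing to NA with == yields NA for every element, not TRUE/FALSE
eq_na <- heights == NA

# is.na() is the reliable test; sum() then counts the missing values
n_missing <- sum(is.na(heights))      # 1

# Dropping the NAs by hand matches what na.rm = TRUE does inside mean()
cleaned <- heights[!is.na(heights)]
mean(cleaned)                         # 4
mean(heights, na.rm = TRUE)           # 4
```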

Challenge

- Using this vector of heights in inches, create a new vector, heights_no_na, with the NAs removed.

R

heights <- c(63, 69, 60, 65, NA, 68, 61, 70, 61, 59, 64, 69, 63, 63, NA, 72, 65, 64, 70, 63, 65)

- Use the function median() to calculate the median of the heights vector.

- Use R to figure out how many people in the set are taller than 67 inches.

R

heights <- c(63, 69, 60, 65, NA, 68, 61, 70, 61, 59, 64, 69, 63, 63, NA, 72, 65, 64, 70, 63, 65)

# 1.

heights_no_na <- heights[!is.na(heights)]

# or

heights_no_na <- na.omit(heights)

# or

heights_no_na <- heights[complete.cases(heights)]

# 2.

median(heights, na.rm = TRUE)

# 3.

heights_above_67 <- heights_no_na[heights_no_na > 67]

length(heights_above_67)

Now that we have learned how to write scripts, and the basics of R’s data structures, we are ready to start working with the Portal dataset we have been using in the other lessons, and learn about data frames.

- <- is used to assign values on the right to objects on the left.

- Code should be saved within the Source pane in RStudio to help you return to your code later.

- ‘#’ can be used to add comments to your code.

- Functions can automate more complicated sets of commands, and require arguments as inputs.

- Vectors are composed of a series of values and can take many forms.

- Data structures in R include ‘vector’, ‘list’, ‘matrix’, ‘data.frame’, ‘factor’, and ‘array’.

- Vectors can be subset by indexing or through logical vectors.

- Many functions exist to remove missing data from data structures.

Content from Starting with data

Last updated on 2026-04-28

Overview

Questions

- What is a data.frame?

- How can I read a complete csv file into R?

- How can I get basic summary information about my dataset?

- How can I extract specific information from a data frame?

- What are factors, and how are they different from other datatypes?

- How can I rename factors?

- How are dates represented in R and how can I change the format?

Objectives

- Load external data from a .csv file into a data frame.

- Install and load packages.

- Describe what a data frame is.

- Summarize the contents of a data frame.

- Use indexing to subset specific portions of data frames.

- Describe what a factor is.

- Convert between strings and factors.

- Reorder and rename factors.

- Change how character strings are handled in a data frame.

- Format dates.

Loading the survey data

We are investigating the animal species diversity and weights found within plots at our study site. The dataset is stored as a comma separated value (CSV) file. Each row holds information for a single animal, and the columns represent:

| Column | Description |

|---|---|

| record_id | Unique id for the observation |

| month | month of observation |

| day | day of observation |

| year | year of observation |

| plot_id | ID of a particular experimental plot of land |

| species_id | 2-letter code |

| sex | sex of animal (“M”, “F”) |

| hindfoot_length | length of the hindfoot in mm |

| weight | weight of the animal in grams |

| genus | genus of animal |

| species | species of animal |

| taxa | e.g. Rodent, Reptile, Bird, Rabbit |

| plot_type | type of plot |

Downloading the data

We created the folder that will store the downloaded data

(data_raw) in the chapter “Before

we start”. If you skipped that part, it may be a good idea to have a

look now, to make sure your working directory is set up properly.

We are going to use the R function download.file() to

download the CSV file that contains the survey data from Figshare, and

we will use read_csv() to load the content of the CSV file

into R.

Inside the download.file() command, the first entry is a character string with the source URL (“https://ndownloader.figshare.com/files/2292169”). This source URL downloads a CSV file from Figshare. The text after the comma (“data_raw/portal_data_joined.csv”) is the destination of the file on your local machine. You’ll need to have a folder on your machine called “data_raw” where you’ll download the file. So this command downloads a file from Figshare, names it “portal_data_joined.csv” and adds it to a preexisting folder named “data_raw”.

R

download.file(url = "https://ndownloader.figshare.com/files/2292169",

destfile = "data_raw/portal_data_joined.csv")
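If you re-run a script often, you may prefer not to download the file every time. The helper below is a hypothetical sketch, not part of the lesson. The name download_if_missing() is made up. It creates the destination folder when needed and skips the download when the file already exists:

```r
# Hypothetical helper: download a file only if it is not already present
download_if_missing <- function(url, destfile) {
  # Create the destination folder if it does not exist yet
  dest_dir <- dirname(destfile)
  if (!dir.exists(dest_dir)) {
    dir.create(dest_dir, recursive = TRUE)
  }
  # Nothing to do when the file is already on disk
  if (file.exists(destfile)) {
    return(invisible(FALSE))
  }
  download.file(url = url, destfile = destfile)
  invisible(TRUE)
}

# Usage (not run here because it needs an internet connection):
# download_if_missing("https://ndownloader.figshare.com/files/2292169",
#                     "data_raw/portal_data_joined.csv")
```

The function returns TRUE (invisibly) when it downloaded something and FALSE when it left an existing file untouched.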

Reading the data into R

The file has now been downloaded to the destination you specified,

but R has not yet loaded the data from the file into memory. To do this,

we can use the read_csv() function from the

tidyverse package.

Packages in R are basically sets of additional functions that let you do more stuff. The functions we’ve been using so far, like round(), sqrt(), or c() come built into R. Packages give you access to additional functions beyond base R. A similar function to read_csv() from the tidyverse package is read.csv() from base R. We don’t have time to cover their differences but notice that the exact spelling determines which function is used. Before you use a package for the first time you need to install it on your machine, and then you should load it in every subsequent R session when you need it.

To install the tidyverse package, we

can type install.packages("tidyverse") straight into the

console. In fact, it’s better to write this in the console than in our

script for any package, as there’s no need to re-install packages every

time we run the script. Then, to load the package type:

R

## load the tidyverse packages, incl. dplyr

library(tidyverse)

Now we can use the functions from the

tidyverse package. Let’s use

read_csv() to read the data into a data frame (we will

learn more about data frames later):

R

surveys <- read_csv("data_raw/portal_data_joined.csv")

OUTPUT

#> Rows: 34786 Columns: 13

#> ── Column specification ────────────────────────────────────────────────────────

#> Delimiter: ","

#> chr (6): species_id, sex, genus, species, taxa, plot_type

#> dbl (7): record_id, month, day, year, plot_id, hindfoot_length, weight

#>

#> ℹ Use `spec()` to retrieve the full column specification for this data.

#> ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

When you execute read_csv() on a data file, it looks through the first 1000 rows of each column and guesses its data type. For example, in this dataset, read_csv() reads weight as col_double (a numeric data type), and species as col_character. You have the option to specify the data type for a column manually by using the col_types argument in read_csv().
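As a minimal sketch of the col_types argument, the example below writes a tiny, made-up CSV to a temporary file and declares the column types up front. It assumes the readr package (installed with the tidyverse) is available. The file contents and the object names tmp and demo are illustrative only:

```r
library(readr)

# A tiny, made-up CSV written to a temporary file for illustration
tmp <- tempfile(fileext = ".csv")
writeLines(c("species_id,plot_id,weight",
             "NL,2,218",
             "DM,3,40"), tmp)

# Declare the column types instead of letting read_csv() guess them
demo <- read_csv(tmp,
                 col_types = cols(
                   species_id = col_character(),
                   plot_id    = col_integer(),
                   weight     = col_double()
                 ))

# Check the type each column ended up with
sapply(demo, typeof)
```

Declaring the types also silences the column specification message, because nothing has to be guessed.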

Note

read_csv() assumes that fields are delimited by commas. However, in several countries, the comma is used as a decimal separator and the semicolon (;) is used as a field delimiter. If you want to read this type of file into R, you can use the read_csv2() function. It behaves like read_csv() but uses different parameters for the decimal and the field separators. There are also read_tsv() for tab-separated data files and read_delim() for less common formats. Check out the help for read_csv() by typing ?read_csv to learn more.

In addition to the above versions of the csv format, you should develop the habit of looking at and recording some parameters of your csv files. For instance, the character encoding, control characters used for line ending, date format (if the date is not split into three variables), and the presence of unexpected newlines are important characteristics of your data files. Knowing those parameters will ease the import step of your data in R.

We can see the contents of the first few lines of the data by typing its name: surveys. By default, this will show you as many rows and columns of the data as fit on your screen. If you wanted the first 50 rows, you could type print(surveys, n = 50).

We can also extract the first few lines of this data using the

function head():

R

head(surveys)

OUTPUT

#> # A tibble: 6 × 13

#> record_id month day year plot_id species_id sex hindfoot_length weight

#> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <chr> <dbl> <dbl>

#> 1 1 7 16 1977 2 NL M 32 NA

#> 2 72 8 19 1977 2 NL M 31 NA

#> 3 224 9 13 1977 2 NL <NA> NA NA

#> 4 266 10 16 1977 2 NL <NA> NA NA

#> 5 349 11 12 1977 2 NL <NA> NA NA

#> 6 363 11 12 1977 2 NL <NA> NA NA

#> # ℹ 4 more variables: genus <chr>, species <chr>, taxa <chr>, plot_type <chr>

Unlike the print() function, head() returns the extracted data. You could use it to assign the first 100 rows of surveys to an object using surveys_sample <- head(surveys, 100). This can be useful if you want to try out complex computations on a subset of your data before you apply them to the whole data set. There is a similar function that lets you extract the last few lines of the data set. It is called (you might have guessed it) tail().

To open the dataset in RStudio’s Data Viewer, use the

view() function:

R

view(surveys)

Note

There are two functions for viewing which are case-sensitive. Using

view() with a lowercase ‘v’ is part of tidyverse, whereas

using View() with an uppercase ‘V’ is loaded through base R

in the utils package.

What are data frames?

When we loaded the data into R, it got stored as an object of class

tibble, which is a special kind of data frame (the

difference is not important for our purposes, but you can learn more

about tibbles here). Data

frames are the de facto data structure for most tabular data,

and what we use for statistics and plotting. Data frames can be created

by hand, but most commonly they are generated by functions like

read_csv(); in other words, when importing spreadsheets

from your hard drive or the web.

A data frame is the representation of data in the format of a table where the columns are vectors that all have the same length. Because columns are vectors, each column must contain a single type of data (e.g., characters, integers, factors). For example, here is a figure depicting a data frame comprising a numeric, a character, and a logical vector.

We can see this also when inspecting the structure of a data

frame with the function str():

R

str(surveys)

Inspecting data frames

We already saw how the functions head() and

str() can be useful to check the content and the structure

of a data frame. Here is a non-exhaustive list of functions to get a

sense of the content/structure of the data. Let’s try them out!

- Size:

  - dim(surveys) - returns a vector with the number of rows in the first element, and the number of columns as the second element (the dimensions of the object)

  - nrow(surveys) - returns the number of rows

  - ncol(surveys) - returns the number of columns

- Content:

  - head(surveys) - shows the first 6 rows

  - tail(surveys) - shows the last 6 rows

- Names:

  - names(surveys) - returns the column names (synonym of colnames() for data.frame objects)

  - rownames(surveys) - returns the row names

- Summary:

  - str(surveys) - structure of the object and information about the class, length and content of each column

  - summary(surveys) - summary statistics for each column

Note: most of these functions are “generic”; they can be used on other types of objects besides data.frame.
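If you want to try these functions without loading the survey data, a small hand-made data frame works too. The sketch below uses made-up values and a made-up object name (demo). The last two lines illustrate the “generic” point by calling head() and summary() on a plain vector:

```r
# A small, made-up data frame standing in for surveys
demo <- data.frame(
  species_id = c("NL", "DM", "DM", "PF"),
  weight     = c(218, 40, NA, 8)
)

dim(demo)            # 4 rows, 2 columns
nrow(demo)           # 4
ncol(demo)           # 2
names(demo)          # "species_id" "weight"
head(demo, 2)        # first 2 rows
summary(demo$weight) # summary statistics, including the NA count

# The same functions also accept other kinds of objects, such as vectors
first_three <- head(1:10, 3)
vec_summary <- summary(c(1, 2, 3))
```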

Challenge

Based on the output of str(surveys), can you answer the

following questions?

- What is the class of the object surveys?

- How many rows and how many columns are in this object?

R

str(surveys)

OUTPUT

#> spc_tbl_ [34,786 × 13] (S3: spec_tbl_df/tbl_df/tbl/data.frame)

#> $ record_id : num [1:34786] 1 72 224 266 349 363 435 506 588 661 ...

#> $ month : num [1:34786] 7 8 9 10 11 11 12 1 2 3 ...

#> $ day : num [1:34786] 16 19 13 16 12 12 10 8 18 11 ...

#> $ year : num [1:34786] 1977 1977 1977 1977 1977 ...

#> $ plot_id : num [1:34786] 2 2 2 2 2 2 2 2 2 2 ...

#> $ species_id : chr [1:34786] "NL" "NL" "NL" "NL" ...

#> $ sex : chr [1:34786] "M" "M" NA NA ...

#> $ hindfoot_length: num [1:34786] 32 31 NA NA NA NA NA NA NA NA ...

#> $ weight : num [1:34786] NA NA NA NA NA NA NA NA 218 NA ...

#> $ genus : chr [1:34786] "Neotoma" "Neotoma" "Neotoma" "Neotoma" ...

#> $ species : chr [1:34786] "albigula" "albigula" "albigula" "albigula" ...

#> $ taxa : chr [1:34786] "Rodent" "Rodent" "Rodent" "Rodent" ...

#> $ plot_type : chr [1:34786] "Control" "Control" "Control" "Control" ...

#> - attr(*, "spec")=

#> .. cols(

#> .. record_id = col_double(),

#> .. month = col_double(),

#> .. day = col_double(),

#> .. year = col_double(),

#> .. plot_id = col_double(),

#> .. species_id = col_character(),

#> .. sex = col_character(),

#> .. hindfoot_length = col_double(),

#> .. weight = col_double(),

#> .. genus = col_character(),

#> .. species = col_character(),

#> .. taxa = col_character(),

#> .. plot_type = col_character()

#> .. )

#> - attr(*, "problems")=<externalptr>

R

## * class: data frame

## * how many rows: 34786, how many columns: 13

Indexing and subsetting data frames

Our survey data frame has rows and columns (it has 2 dimensions). If we want to extract some specific data from it, we need to specify the “coordinates” we want from it. Row numbers come first, followed by column numbers. However, note that different ways of specifying these coordinates lead to results with different classes.

R

# We can extract specific values by specifying row and column indices

# in the format:

# data_frame[row_index, column_index]

# For instance, to extract the first row and column from surveys:

surveys[1, 1]

# First row, sixth column:

surveys[1, 6]

# We can also use shortcuts to select a number of rows or columns at once

# To select all columns, leave the column index blank

# For instance, to select all columns for the first row:

surveys[1, ]

# The same shortcut works for rows --

# To select the first column across all rows:

surveys[, 1]

# An even shorter way to select first column across all rows:

surveys[1] # No comma!

# To select multiple rows or columns, use vectors!

# To select the first three rows of the 5th and 6th column

surveys[c(1, 2, 3), c(5, 6)]

# We can use the : operator to create those vectors for us:

surveys[1:3, 5:6]

# This is equivalent to head_surveys <- head(surveys)

head_surveys <- surveys[1:6, ]

# As we've seen, when working with tibbles

# subsetting with single square brackets ("[]") always returns a data frame.

# If you want a vector, use double square brackets ("[[]]")

# For instance, to get the first column as a vector:

surveys[[1]]

# To get the first value in our data frame:

surveys[[1, 1]]

: is a special function that creates numeric vectors of integers in increasing or decreasing order; test 1:10 and 10:1 for instance.

You can also exclude certain indices of a data frame using the

“-” sign:

R

surveys[, -1] # The whole data frame, except the first column

surveys[-(7:nrow(surveys)), ] # Equivalent to head(surveys)

Data frames can be subset by calling indices (as shown previously), but also by calling their column names directly:

R

# As before, using single brackets returns a data frame:

surveys["species_id"]

surveys[, "species_id"]

# Double brackets returns a vector:

surveys[["species_id"]]

# We can also use the $ operator with column names instead of double brackets

# This returns a vector:

surveys$species_id

In RStudio, you can use the autocompletion feature to get the full and correct names of the columns.
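The comments above describe tibbles, where single brackets always return a data frame. A plain data.frame created with data.frame() behaves differently when a single column is selected with a comma. The sketch below illustrates this with a made-up data frame (demo), using base R only:

```r
# Made-up data frame; stringsAsFactors = FALSE keeps text as character
demo <- data.frame(
  species_id = c("NL", "DM", "PF"),
  weight     = c(218, 40, 8),
  stringsAsFactors = FALSE
)

class(demo["species_id"])                  # "data.frame"
class(demo[, "species_id"])                # "character": dropped to a vector
class(demo[, "species_id", drop = FALSE])  # "data.frame": drop = FALSE keeps it
class(demo[["species_id"]])                # "character"
class(demo$weight)                         # "numeric"
```

When code must work with both tibbles and plain data frames, [[ ]] or $ is the unambiguous way to ask for a vector.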

Challenge

- Create a data.frame (surveys_200) containing only the data in row 200 of the surveys dataset.

- Notice how nrow() gave you the number of rows in a data.frame?

  - Use that number to pull out just that last row from the surveys dataset.

  - Compare that with what you see as the last row using tail() to make sure it’s meeting expectations.

  - Pull out that last row using nrow() instead of the row number.

  - Create a new data frame (surveys_last) from that last row.

- Use nrow() to extract the row that is in the middle of the data frame. Store the content of this row in an object named surveys_middle.

- Combine nrow() with the - notation above to reproduce the behavior of head(surveys), keeping just the first through 6th rows of the surveys dataset.

R

## 1.

surveys_200 <- surveys[200, ]

## 2.

# Saving `n_rows` to improve readability and reduce duplication

n_rows <- nrow(surveys)

surveys_last <- surveys[n_rows, ]

## 3.

surveys_middle <- surveys[n_rows / 2, ]

## 4.

surveys_head <- surveys[-(7:n_rows), ]

Factors

When we did str(surveys) we saw that several of the columns consist of numbers. The columns genus, species, sex, plot_type, … however, are of the class character. Arguably, these columns contain categorical data, that is, they can only take on a limited number of values.

R has a special class for working with categorical data, called

factor. Factors are very useful and actually contribute to

making R particularly well suited to working with data. So we are going

to spend a little time introducing them.

Once created, factors can only contain a pre-defined set of values, known as levels. Factors are stored as integers associated with labels and they can be ordered or unordered. While factors look (and often behave) like character vectors, they are actually treated as integer vectors by R. So you need to be very careful when treating them as strings.

When importing a data frame with read_csv(), the columns

that contain text are not automatically coerced (=converted) into the

factor data type, but once we have loaded the data we can

do the conversion using the factor() function:

R

surveys$sex <- factor(surveys$sex)

We can see that the conversion has worked by using the

summary() function again. This produces a table with the

counts for each factor level:

R

summary(surveys$sex)

By default, R always sorts levels in alphabetical order. For instance, if you have a factor with 2 levels:

R

sex <- factor(c("male", "female", "female", "male"))

R will assign 1 to the level "female" and

2 to the level "male" (because f

comes before m, even though the first element in this

vector is "male"). You can see this by using the function

levels() and you can find the number of levels using

nlevels():

R

levels(sex)

nlevels(sex)

Sometimes, the order of the factors does not matter; other times you might want to specify the order because it is meaningful (e.g., “low”, “medium”, “high”), it improves your visualization, or it is required by a particular type of analysis. Here, one way to reorder our levels in the sex vector would be:

R

sex # current order

OUTPUT

#> [1] male female female male

#> Levels: female male

R

sex <- factor(sex, levels = c("male", "female"))

sex # after re-ordering

OUTPUT

#> [1] male female female male

#> Levels: male female

In R’s memory, these factors are represented by integers (1, 2, 3), but are more informative than integers because factors are self-describing: "female", "male" is more descriptive than 1, 2. Which one is “male”? You wouldn’t be able to tell just from the integer data. Factors, on the other hand, have this information built in. It is particularly helpful when there are many levels (like the species names in our example dataset).
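The integer representation can be inspected directly. This sketch uses a separate, made-up object (sex_demo) so it does not overwrite the sex vector used above:

```r
# Separate demo object, with the default (alphabetical) level order
sex_demo <- factor(c("male", "female", "female", "male"))

typeof(sex_demo)      # "integer": how the values are stored
class(sex_demo)       # "factor": how R treats the object
levels(sex_demo)      # "female" "male"

# The underlying integer codes point into the levels
codes <- as.integer(sex_demo)        # 2 1 1 2
labels_back <- levels(sex_demo)[codes]
labels_back           # "male" "female" "female" "male"
```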

Challenge

- Change the columns taxa and genus in the surveys data frame into a factor.

- Using the functions you learned before, can you find out…

  - How many rabbits were observed?

  - How many different genera are in the genus column?

R

surveys$taxa <- factor(surveys$taxa)

surveys$genus <- factor(surveys$genus)

summary(surveys)

nlevels(surveys$genus)

## * how many genera: There are 26 unique genera in the `genus` column.

## * how many rabbits: There are 75 rabbits in the `taxa` column.

Converting factors

If you need to convert a factor to a character vector, you use

as.character(x).

R

as.character(sex)

In some cases, you may have to convert factors where the levels

appear as numbers (such as concentration levels or years) to a numeric

vector. For instance, in one part of your analysis the years might need

to be encoded as factors (e.g., comparing average weights across years)

but in another part of your analysis they may need to be stored as

numeric values (e.g., doing math operations on the years). This

conversion from factor to numeric is a little trickier. The

as.numeric() function returns the index values of the

factor, not its levels, so it will result in an entirely new (and

unwanted in this case) set of numbers. One method to avoid this is to

convert factors to characters, and then to numbers.

Another method is to use the levels() function.

Compare:

R

year_fct <- factor(c(1990, 1983, 1977, 1998, 1990))

as.numeric(year_fct) # Wrong! And there is no warning...

as.numeric(as.character(year_fct)) # Works...

as.numeric(levels(year_fct))[year_fct] # The recommended way.

Notice that in the levels() approach, three important steps occur:

- We obtain all the factor levels using levels(year_fct)

- We convert these levels to numeric values using as.numeric(levels(year_fct))

- We then access these numeric values using the underlying integers of the vector year_fct inside the square brackets
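The three steps can be written out one at a time. In this sketch the intermediate object names (lvl_chr, lvl_num, codes, years) are made up for illustration:

```r
year_fct <- factor(c(1990, 1983, 1977, 1998, 1990))

# Step 1: the levels, as character strings in sorted order
lvl_chr <- levels(year_fct)      # "1977" "1983" "1990" "1998"

# Step 2: the levels converted to numbers
lvl_num <- as.numeric(lvl_chr)   # 1977 1983 1990 1998

# Step 3: the underlying integer codes pick the right level for each element
codes <- as.integer(year_fct)    # 3 2 1 4 3
years <- lvl_num[codes]          # 1990 1983 1977 1998 1990

# Same result as the one-line version
identical(years, as.numeric(levels(year_fct))[year_fct])  # TRUE
```

Only the few unique levels are converted in step 2, not every element of the vector.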

Renaming factors

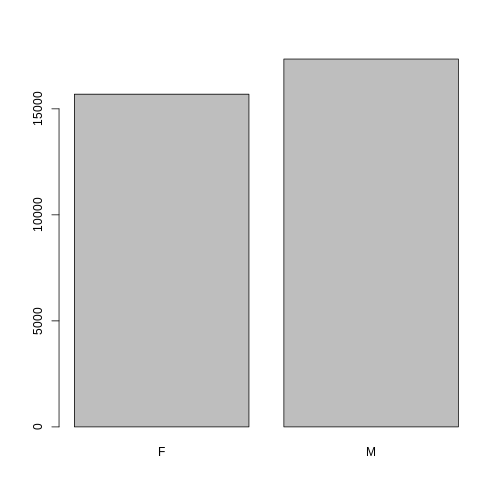

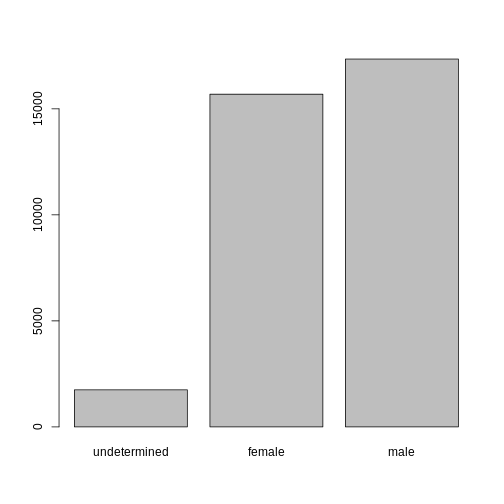

When your data is stored as a factor, you can use the

plot() function to get a quick glance at the number of

observations represented by each factor level. Let’s look at the number

of males and females captured over the course of the experiment:

R

## bar plot of the number of females and males captured during the experiment:

plot(surveys$sex)

However, as we saw when we used summary(surveys$sex),

there are about 1700 individuals for which the sex information hasn’t

been recorded. To show them in the plot, we can turn the missing values

into a factor level with the addNA() function. We will also

have to give the new factor level a label. We are going to work with a

copy of the sex column, so we’re not modifying the working

copy of the data frame:

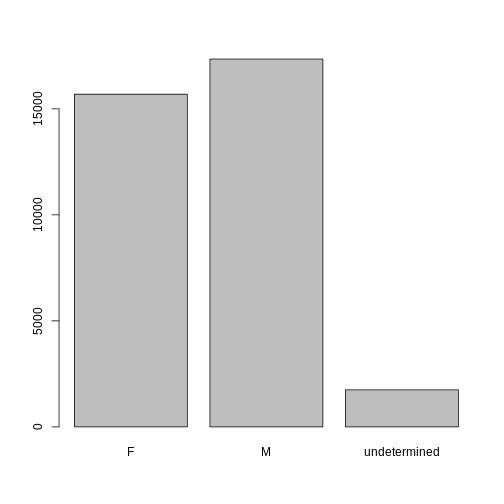

R

sex <- surveys$sex

levels(sex)

OUTPUT

#> [1] "F" "M"

R

sex <- addNA(sex)

levels(sex)

OUTPUT

#> [1] "F" "M" NA

R

head(sex)

OUTPUT

#> [1] M M <NA> <NA> <NA> <NA>

#> Levels: F M <NA>

R

levels(sex)[3] <- "undetermined"

levels(sex)

OUTPUT

#> [1] "F" "M" "undetermined"

R

head(sex)

OUTPUT

#> [1] M M undetermined undetermined undetermined

#> [6] undetermined

#> Levels: F M undetermined

Now we can plot the data again, using plot(sex).

Challenge

- Rename “F” and “M” to “female” and “male” respectively.

- Now that we have renamed the factor level to “undetermined”, can you recreate the barplot such that “undetermined” is first (before “female”)?

R

levels(sex)[1:2] <- c("female", "male")

sex <- factor(sex, levels = c("undetermined", "female", "male"))

plot(sex)

Challenge

- We have seen how data frames are created when using read_csv(), but they can also be created by hand with the data.frame() function. There are a few mistakes in this hand-crafted data.frame. Can you spot and fix them? Don’t hesitate to experiment!

R

animal_data <- data.frame(

animal = c(dog, cat, sea cucumber, sea urchin),

feel = c("furry", "squishy", "spiny"),

weight = c(45, 8 1.1, 0.8)

)

- Can you predict the class for each of the columns in the following example? Check your guesses using str(country_climate):

  - Are they what you expected? Why? Why not?

  - What would you need to change to ensure that each column had the accurate data type?

R

country_climate <- data.frame(

country = c("Canada", "Panama", "South Africa", "Australia"),

climate = c("cold", "hot", "temperate", "hot/temperate"),

temperature = c(10, 30, 18, "15"),

northern_hemisphere = c(TRUE, TRUE, FALSE, "FALSE"),

has_kangaroo = c(FALSE, FALSE, FALSE, 1)

)

The automatic conversion of data type is sometimes a blessing, sometimes an annoyance. Be aware that it exists, learn the rules, and double check that data you import in R are of the correct type within your data frame. If not, use it to your advantage to detect mistakes that might have been introduced during data entry (for instance, a letter in a column that should only contain numbers).

Learn more in this RStudio tutorial
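As a minimal sketch of how coercion can help detect data-entry mistakes, the example below uses a made-up character vector (raw_weight is hypothetical and not part of the surveys data) in which one entry contains a letter instead of a digit. Converting it with as.numeric() turns the invalid entry into NA, and is.na() can then locate it.

```r
# Hypothetical column that should be numeric but contains a typo:
# "3o" ends with the letter o instead of a zero
raw_weight <- c("45", "8", "3o", "0.8")

# Because of the stray letter, the whole vector is stored as character
class(raw_weight)

# Converting to numeric yields NA for entries that are not valid numbers;
# suppressWarnings() hides the "NAs introduced by coercion" warning
weight <- suppressWarnings(as.numeric(raw_weight))

# Positions and original values of the entries that failed to convert
bad <- which(is.na(weight) & !is.na(raw_weight))
bad
raw_weight[bad]
```

The same check can be applied to a column of a data frame that was imported as character when numbers were expected.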

Formatting dates

A common issue that new (and experienced!) R users have is converting

date and time information into a variable that is suitable for analyses.

One way to store date information is to store each component of the date

in a separate column. Using str(), we can confirm that our

data frame does indeed have a separate column for day, month, and year,

and that each of these columns contains integer values.

R

str(surveys)

We are going to use the ymd() function from the package

lubridate (which belongs to the

tidyverse; learn more here).

lubridate gets installed as part of the

tidyverse installation. When you load the

tidyverse

(library(tidyverse)), the core packages (the packages used

in most data analyses) get loaded.

In tidyverse versions before 2.0.0, lubridate does not belong to the

core tidyverse, so you have to load it explicitly with

library(lubridate). From tidyverse 2.0.0 onward it is loaded along with the core packages, and loading it explicitly does no harm.

Start by loading the required package:

R

library(lubridate)

The lubridate package has many useful

functions for working with dates. These can help you extract dates from

different string representations, convert between timezones, calculate

time differences and more. You can find an overview of them in the lubridate

cheat sheet.
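The short sketch below illustrates a few of these capabilities. The dates and the time zone are arbitrary values chosen for illustration, and the example assumes lubridate is installed.

```r
library(lubridate)

# Parse dates written in different orders and with different separators
d1 <- ymd("2015-01-01")
d2 <- dmy("15/03/2015")
d3 <- mdy("03/20/2015")

# Extract individual components of a date
year(d2)
month(d2)
day(d2)

# Subtracting two dates gives a time difference in days
d2 - d1

# Convert a date-time to another time zone
# (same instant, different clock time)
t_utc <- ymd_hms("2015-01-01 12:00:00", tz = "UTC")
t_ny <- with_tz(t_utc, tzone = "America/New_York")
t_ny
```

Each parsing function is named after the order of the components it expects, so ymd(), dmy(), and mdy() all return the same Date class.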

Here we will use the function ymd(), which takes a

vector representing year, month, and day, and converts it to a

Date vector. Date is a class of data

recognized by R as being a date and can be manipulated as such. The

function accepts input in several forms, but, as a best

practice, pass it a character vector formatted as “YYYY-MM-DD”.

Let’s create a date object and inspect the structure:

R

my_date <- ymd("2015-01-01")

str(my_date)

Now let’s paste the year, month, and day separately - we get the same result:

R

# sep indicates the character to use to separate each component

my_date <- ymd(paste("2015", "1", "1", sep = "-"))

str(my_date)

Now we apply this function to the surveys dataset. Create a character

vector from the year, month, and

day columns of surveys using

paste():

R

paste(surveys$year, surveys$month, surveys$day, sep = "-")

This character vector can be used as the argument for

ymd():

R

ymd(paste(surveys$year, surveys$month, surveys$day, sep = "-"))

WARNING

#> Warning: 129 failed to parse.

There is a warning telling us that some dates could not be parsed

(understood) by the ymd() function. For these dates, the

function has returned NA, which means they are treated as

missing values. We will deal with this problem later, but first we add

the resulting Date vector to the surveys data

frame as a new column called date:

R

surveys$date <- ymd(paste(surveys$year, surveys$month, surveys$day, sep = "-"))

WARNING

#> Warning: 129 failed to parse.

R

str(surveys) # notice the new column 'date', with 'Date' as the class

Let’s make sure everything worked correctly. One way to inspect the

new column is to use summary():

R

summary(surveys$date)

OUTPUT

#>         Min.      1st Qu.       Median         Mean      3rd Qu.         Max.

#> "1977-07-16" "1984-03-12" "1990-07-22" "1990-12-15" "1997-07-29" "2002-12-31"

#>         NA's

#>        "129"

Let’s investigate why some dates could not be parsed.

We can use the functions we saw previously to deal with missing data

to identify the rows in our data frame that are failing. If we combine

them with what we learned about subsetting data frames earlier, we can

extract the columns “year”, “month”, “day” from the records that have

NA in our new column date. We will also use

head() so we don’t clutter the output:

R

missing_dates <- surveys[is.na(surveys$date), c("year", "month", "day")]

head(missing_dates)

OUTPUT

#> # A tibble: 6 × 3

#>    year month   day

#>   <dbl> <dbl> <dbl>

#> 1  2000     9    31

#> 2  2000     4    31

#> 3  2000     4    31

#> 4  2000     4    31

#> 5  2000     4    31

#> 6  2000     9    31

Why did these dates fail to parse? If you had to use these data for your analyses, how would you deal with this situation?

These dates failed to parse because the dates provided as input for the

ymd() function do not actually exist. In the

output above, the day is recorded as 31 for September and April, but

these months only have 30 days.
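The behaviour can be reproduced on a single value. The sketch below assumes lubridate is installed. It uses the quiet argument of ymd() to suppress the parsing warning and days_in_month() to look up the length of a month.

```r
library(lubridate)

# A date that does not exist: September has only 30 days.
# quiet = TRUE suppresses the "failed to parse" warning.
impossible <- ymd("2000-09-31", quiet = TRUE)
is.na(impossible)

# The last valid day of that month parses without problems
ymd("2000-09-30")

# days_in_month() reports how many days a given month has
days_in_month(ymd("2000-09-01"))
days_in_month(ymd("2000-04-01"))
```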

There are several ways you could deal with this situation:

- If you have access to the raw data (e.g., field sheets) or supporting information (e.g., field trip reports/logs), check them and ensure the electronic database matches the information in the original data source.

- If you are able to contact the person responsible for collecting the data, you could refer to them and ask for clarification.

- You could also check the rest of the dataset for clues about the correct value for the erroneous dates.

- If your project has guidelines on how to correct this sort of error, refer to them and apply any recommendations.

- If it is not possible to ascertain the correct value for these observations, you may want to leave them as missing data.

Regardless of the option you choose, it is important that you document the error and the corrections (if any) that you apply to your data.
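One possible way to document such problems alongside the data is sketched below. The records data frame and the column names date_invalid and date_note are hypothetical, chosen only for illustration. The approach leaves impossible dates as NA and records in separate columns which rows were affected and why.

```r
library(lubridate)

# Hypothetical miniature version of the year, month, and day columns
records <- data.frame(
  year  = c(2000, 2000, 2001),
  month = c(9, 4, 7),
  day   = c(31, 30, 15)
)

# Parse the dates; impossible dates become NA (warning suppressed)
records$date <- ymd(
  paste(records$year, records$month, records$day, sep = "-"),
  quiet = TRUE
)

# Flag the rows whose date could not be parsed
records$date_invalid <- is.na(records$date)

# Record the decision that was taken for each flagged row
records$date_note <- ifelse(
  records$date_invalid,
  "day exceeds length of month; date left as NA",
  NA_character_
)

records
```

Keeping the original year, month, and day columns untouched means the raw values remain available if the correct date is later recovered from the field sheets.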

- Use read.csv to read tabular data in R.

- A data frame is the representation of data in the format of a table where the columns are vectors that all have the same length.

- dplyr provides many methods for inspecting and summarizing data in data frames.

- Use factors to represent categorical data in R.

- The lubridate package has many useful functions for working with dates.

Content from Manipulating, analyzing and exporting data with tidyverse

Last updated on 2026-04-28

Overview

Questions

- What are dplyr and tidyr?

- How can I select specific rows and/or columns from a dataframe?

- How can I combine multiple commands into a single command?

- How can I create new columns or remove existing columns from a dataframe?

Objectives

- Describe the purpose of the dplyr and tidyr packages.

- Select certain columns in a data frame with the dplyr function select.

- Extract certain rows in a data frame according to logical (boolean) conditions with the dplyr function filter.

- Link the output of one dplyr function to the input of another function with the ‘pipe’ operator %>%.

- Add new columns to a data frame that are functions of existing columns with mutate.

- Use the split-apply-combine concept for data analysis.

- Use summarize, group_by, and count to split a data frame into groups of observations, apply summary statistics for each group, and then combine the results.

- Describe the concept of a wide and a long table format and for which purpose those formats are useful.

- Describe what key-value pairs are.

- Reshape a data frame from long to wide format and back with the pivot_wider and pivot_longer commands from the tidyr package.

- Export a data frame to a .csv file.

Data manipulation using dplyr and tidyr

Bracket subsetting is handy, but it can be cumbersome and difficult

to read, especially for complicated operations. Enter

dplyr. dplyr

is a package for helping with tabular data manipulation. It pairs nicely

with tidyr which enables you to swiftly

convert between different data formats for plotting and analysis.

The tidyverse package is an

“umbrella-package” that installs tidyr,

dplyr, and several other useful packages

for data analysis, such as ggplot2,

tibble, etc.

The tidyverse package tries to address

3 common issues that arise when doing data analysis in R:

- The results from a base R function sometimes depend on the type of data.

- R expressions are used in a non-standard way, which can be confusing for new learners.

- The existence of hidden arguments having default operations that new learners are not aware of.
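The first and third issues can be seen with bracket subsetting in base R. The sketch below uses a small made-up data frame (df is hypothetical). It shows that the class of the result depends on how many columns are selected, because of the hidden drop argument, which defaults to TRUE.

```r
# Small hypothetical data frame for illustration
df <- data.frame(a = c(1, 2, 3), b = c("x", "y", "z"))

# Selecting two columns with brackets returns a data frame
class(df[, c("a", "b")])         # "data.frame"

# Selecting one column silently returns a vector,
# because the hidden argument drop defaults to TRUE
class(df[, "a"])                 # "numeric"

# Setting drop = FALSE explicitly keeps the data frame structure
class(df[, "a", drop = FALSE])   # "data.frame"
```

By contrast, the dplyr function select() returns a data frame regardless of how many columns are selected.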

You should already have installed and loaded the

tidyverse package. If you haven’t already

done so, you can type install.packages("tidyverse")

straight into the console. Then, type library(tidyverse) to

load the package.

What are dplyr and tidyr?

The package dplyr provides helper tools

for the most common data manipulation tasks. It is built to work

directly with data frames, with many common tasks optimized by being

written in a compiled language (C++). An additional feature is the

ability to work directly with data stored in an external database. The

benefits of doing this are that the data can be managed natively in a

relational database, queries can be conducted on that database, and only

the results of the query are returned.

This addresses a common problem with R in that all operations are conducted in-memory and thus the amount of data you can work with is limited by available memory. The database connections essentially remove that limitation in that you can connect to a database of many hundreds of GB, conduct queries on it directly, and pull back into R only what you need for analysis.
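A minimal sketch of this workflow is shown below. It assumes that the DBI, RSQLite, and dbplyr packages are installed in addition to dplyr, and it uses an in-memory SQLite database with a small made-up table (demo and demo_surveys are hypothetical names) as a stand-in for a large external database.

```r
library(dplyr)

# In-memory SQLite database standing in for a large external database
con <- DBI::dbConnect(RSQLite::SQLite(), ":memory:")

# Hypothetical table copied into the database for demonstration
demo <- data.frame(
  species_id = c("DM", "DM", "PF", "NL"),
  weight     = c(40, 48, 8, 180)
)
copy_to(con, demo, name = "demo_surveys")

# tbl() creates a reference to the remote table; no rows are loaded yet
remote <- tbl(con, "demo_surveys")

# The filter is translated to SQL and run inside the database;
# collect() pulls only the matching rows back into R
heavy <- remote %>%
  filter(weight > 45) %>%
  collect()

heavy

DBI::dbDisconnect(con)
```

With a real database, only the connection call would change. The dplyr verbs stay the same, and the data remain in the database until collect() is called.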

The package tidyr addresses the common

problem of wanting to reshape your data for plotting and usage by

different R functions. For example, sometimes we want data sets where we

have one row per measurement. Other times we want a data frame where

each measurement type has its own column, and rows are instead more